Professional Experience

Languages: C++, Python

Frameworks: Qt, MFC, React

Tools: Visual Studio, Qt Creator, TensorFlow

Protocols: I2C, GPIO, Serial

Embedded Systems:

In my professional role, I work almost exclusively on designing software for the controllers that run our pinstamp and laser systems. This work is mostly done in C++, with supporting languages such as Qt's QML for GUI development. I also work extensively with Microsoft Foundation Classes (MFC) to support and further develop our legacy systems. This work includes communicating with servo and stepper controllers over I2C and GPIO, building file system navigators, and working with the rest of our team to integrate database connectivity.

Web-Apps:

As a personal project, I created a web application that ran a trained neural network model in Python and served it through a Flask backend running on a Gunicorn server. I hosted the website through IONOS and used tools such as AWS S3 for data storage. The purpose of this web-app was to predict changes in wildfire occurrence at the county level across the entire United States.
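Flask applications are WSGI callables, and WSGI is the interface Gunicorn serves. The stdlib-only WSGI app below is a rough, hypothetical sketch of that request path: it accepts a county identifier and returns a JSON prediction. It is not the original code. The `predict_risk` stub, the `/predict` route, and the `fips` query parameter are all assumptions standing in for the real model and API.

```python
import json
from urllib.parse import parse_qs


def predict_risk(fips: str) -> float:
    """Hypothetical stand-in for the trained model's inference call."""
    # A real deployment would load the model once at startup and run it here.
    return 0.42


def app(environ, start_response):
    """Minimal WSGI app: the same callable interface Gunicorn serves."""
    if environ.get("PATH_INFO") != "/predict":
        start_response("404 Not Found", [("Content-Type", "text/plain")])
        return [b"not found"]

    query = parse_qs(environ.get("QUERY_STRING", ""))
    fips = query.get("fips", [""])[0]  # hypothetical county identifier
    if not fips:
        start_response("400 Bad Request", [("Content-Type", "text/plain")])
        return [b"missing fips parameter"]

    body = json.dumps({"fips": fips, "risk": predict_risk(fips)}).encode("utf-8")
    start_response("200 OK", [("Content-Type", "application/json"),
                              ("Content-Length", str(len(body)))])
    return [body]
```

For local testing, `wsgiref.simple_server.make_server` can serve `app`. In production, a command along the lines of `gunicorn module:app` would serve it instead, where `module` is whatever file holds the callable.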

Dataset Creation:

For my web-app, which I named "Wildfire Condition Prediction" and which was formerly accessible at www.WildfireConditionPrediction.com, I created my own dataset by merging existing wildfire-condition datasets with historical weather datasets. I then matched the time and location of each instance to its county, which included mapping latitude and longitude coordinates to their respective counties.

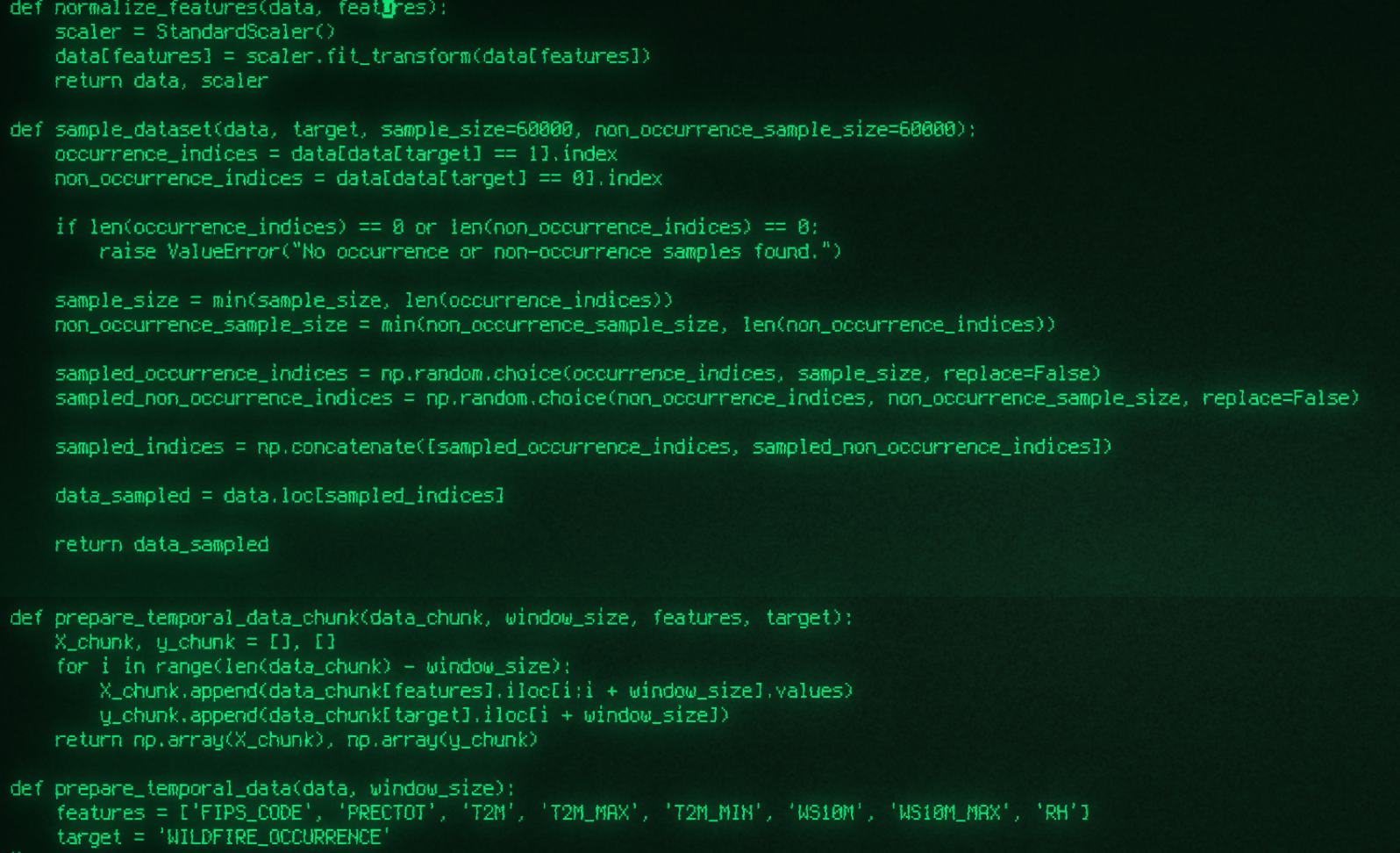
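Mapping a latitude/longitude pair to a county is a point-in-polygon lookup against county boundary shapes. Below is a minimal sketch of that lookup using the ray-casting test. The square `COUNTIES` outlines are made-up placeholders. A real pipeline would load actual boundary data (for example, Census TIGER/Line shapefiles) and would likely use a spatial library and index rather than this linear scan.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]  # (longitude, latitude)

# Hypothetical, simplified county outlines; real boundaries have thousands of vertices.
COUNTIES: Dict[str, List[Point]] = {
    "County A": [(-120.0, 38.0), (-119.0, 38.0), (-119.0, 39.0), (-120.0, 39.0)],
    "County B": [(-119.0, 38.0), (-118.0, 38.0), (-118.0, 39.0), (-119.0, 39.0)],
}


def point_in_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray casting: toggle 'inside' for each edge a rightward ray from the point crosses."""
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            # x coordinate where this edge meets that horizontal line.
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def county_for(lat: float, lon: float) -> Optional[str]:
    """Return the first county whose outline contains the point, or None."""
    for name, outline in COUNTIES.items():
        if point_in_polygon((lon, lat), outline):
            return name
    return None
```

For example, `county_for(38.5, -119.5)` falls inside the first placeholder square. A point outside every outline returns `None`, which a dataset pipeline would need to log or drop.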

I started by training a random forest model on a smaller, hand-picked dataset and found the results promising, but I hoped a neural network would detect more complex patterns and signals in the weather data leading up to a wildfire occurrence. After refining and then expanding my dataset to include weather data for the two weeks preceding each wildfire event, I ended up with a massive (to me) dataset of hundreds of thousands of rows.
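One way to assemble the two-week lookback is to pair each fire event with the daily weather rows for the 14 days before it. The sketch below assumes the weather data is already keyed by `(county, date)`. It uses hypothetical feature names (`temp_max`, `humidity`). Events with gaps in their window are skipped; that is a stated assumption, not a claim about how the original pipeline handled missing days.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional, Tuple

WINDOW_DAYS = 14  # two weeks of weather preceding each event

# Hypothetical daily weather table: (county, day) -> feature values.
Weather = Dict[Tuple[str, date], Dict[str, float]]
FEATURES = ("temp_max", "humidity")  # illustrative feature names


def build_window(county: str, event_day: date,
                 weather: Weather) -> Optional[List[List[float]]]:
    """Return WINDOW_DAYS feature rows (oldest first) ending the day before
    event_day, or None if any day in the window is missing."""
    rows = []
    for offset in range(WINDOW_DAYS, 0, -1):
        day = event_day - timedelta(days=offset)
        record = weather.get((county, day))
        if record is None:
            return None  # incomplete window; skip this event
        rows.append([record[f] for f in FEATURES])
    return rows
```

Stacking the windows for many events gives a 3-D array of shape (events, 14, features). Sequence layers such as LSTMs typically take input in that form.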

Machine Learning:

With my dataset in hand, I configured TensorFlow on Linux Mint and eventually got it to use my 3060 Ti GPU, training neural network (NN) models over the course of hours and sometimes even days. Training and dataset creation were the longest and sometimes most difficult parts of the project. By comparison, the backend and frontend servers and the web hosting were fairly straightforward and quick. After weeks of adjusting weights and changing how I prepared the temporal data and divided it into chunks, I arrived at a model I was confident in.

Using split-dataset testing (evaluating the model on data held out from training), I found that it predicted wildfire conditions with roughly 80% accuracy. Weather prediction involves a nearly endless number of variables, and the model was built on a dataset I hand-crafted myself, so I was satisfied with these results.

To put the trained model to use, I pulled current and forecasted weather data from Open-Meteo and other weather services and used it to predict wildfire risk conditions. Predictions were displayed on an interactive Leaflet map. Selecting a county or state showed further details on the current weather predictions and on what made that area a wildfire risk.

I have since taken www.WildfireConditionPrediction.com down, but perhaps I will revisit the project in the future. :)
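The forecast-to-prediction step described above might look roughly like the sketch below. It builds an Open-Meteo forecast request for one location, such as a county centroid, and then reshapes the column-oriented `daily` block of the JSON response into per-day feature rows for a model. The chosen daily variables are assumptions for illustration, so check Open-Meteo's documentation for current variable names. The network call is kept in its own function so the URL-building and parsing logic can be exercised offline.

```python
import json
from typing import Any, Dict, List
from urllib.parse import urlencode
from urllib.request import urlopen

FORECAST_URL = "https://api.open-meteo.com/v1/forecast"
# Assumed feature set; the original project's exact variables are not documented.
DAILY_VARS = ["temperature_2m_max", "precipitation_sum", "wind_speed_10m_max"]


def forecast_url(lat: float, lon: float, days: int = 7) -> str:
    """Build a daily-forecast request URL for one location (e.g. a county centroid)."""
    params = {
        "latitude": lat,
        "longitude": lon,
        "daily": ",".join(DAILY_VARS),
        "forecast_days": days,
        "timezone": "auto",
    }
    # Leave commas unescaped so the variable list stays readable.
    return f"{FORECAST_URL}?{urlencode(params, safe=',')}"


def daily_rows(payload: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Turn the response's column-oriented 'daily' block into one dict per day."""
    daily = payload["daily"]
    rows = []
    for i, day in enumerate(daily["time"]):
        row: Dict[str, Any] = {"date": day}
        for var in DAILY_VARS:
            row[var] = daily[var][i]
        rows.append(row)
    return rows


def fetch_daily(lat: float, lon: float, days: int = 7) -> List[Dict[str, Any]]:
    """Fetch and parse a forecast (requires network access)."""
    with urlopen(forecast_url(lat, lon, days), timeout=10) as resp:
        return daily_rows(json.load(resp))
```

Each parsed row would then need the same scaling and windowing used at training time before reaching the model. Keeping that preprocessing identical between training and serving is the main thing this layout tries to make easy.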